1. Why is a statistical data-driven approach needed in dialogue systems?

A statistical data-driven approach is essential in modern dialogue systems primarily to overcome the limitations of traditional rule-based architectures. Because rule-based systems rely on rigid, hand-crafted scripts, they scale poorly and often fail when they encounter unexpected user inputs or conversational ambiguity.

Statistical approaches address these challenges by leveraging vast amounts of conversational data to learn and adapt autonomously. The key reasons they are necessary include:

- Handling Uncertainty: Human conversation is inherently noisy, and speech recognition often introduces errors. Statistical models, particularly Partially Observable Markov Decision Processes (POMDPs), excel at managing this uncertainty. They maintain a probability distribution over multiple possible interpretations through Dialogue State Tracking, rather than relying on a single, easily broken assumption.
- Automated Policy Optimization: Instead of manually coding every possible conversational turn, statistical systems use Reinforcement Learning (RL). By representing the dialogue as a Markov Decision Process (MDP), the system automatically learns an optimal Dialogue Policy through trial and error, maximizing long-term rewards like successful task completion.
- Scalability: Because they learn dynamically from real-world data rather than human-engineered rules, statistical systems are significantly more scalable, adaptable to new domains, and resilient to varied user behaviors.
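
The idea of keeping a probability distribution over interpretations, rather than a single guess, can be illustrated with a small sketch. It is a simplified Bayesian update, not a full POMDP tracker: the intent names, the likelihood numbers, and the `update_belief` helper are all assumptions made up for this example.

```python
from typing import Dict

def update_belief(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """Bayes rule: posterior(intent) is proportional to prior(intent) * P(observation | intent)."""
    unnormalized = {intent: prior[intent] * likelihood.get(intent, 0.0) for intent in prior}
    total = sum(unnormalized.values())
    if total == 0.0:
        # The observation is impossible under every hypothesis; keep the prior.
        return dict(prior)
    return {intent: p / total for intent, p in unnormalized.items()}

# Hypothetical intents with a uniform starting belief.
belief = {"book_flight": 1 / 3, "book_hotel": 1 / 3, "cancel_booking": 1 / 3}

# Turn 1: the recognizer is unsure between "flight" and "hotel" (made-up scores).
turn1 = {"book_flight": 0.5, "book_hotel": 0.4, "cancel_booking": 0.1}
belief = update_belief(belief, turn1)

# Turn 2: the follow-up utterance favors "flight" more strongly (made-up scores).
turn2 = {"book_flight": 0.7, "book_hotel": 0.2, "cancel_booking": 0.1}
belief = update_belief(belief, turn2)

# The system can act on the most probable intent, or ask for clarification
# if no intent is probable enough.
best_intent = max(belief, key=belief.get)
```

After the first noisy turn the system still considers "book_hotel" plausible; only the accumulated evidence of two turns makes "book_flight" clearly dominant, which is the behavior a single hard-coded interpretation cannot provide.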

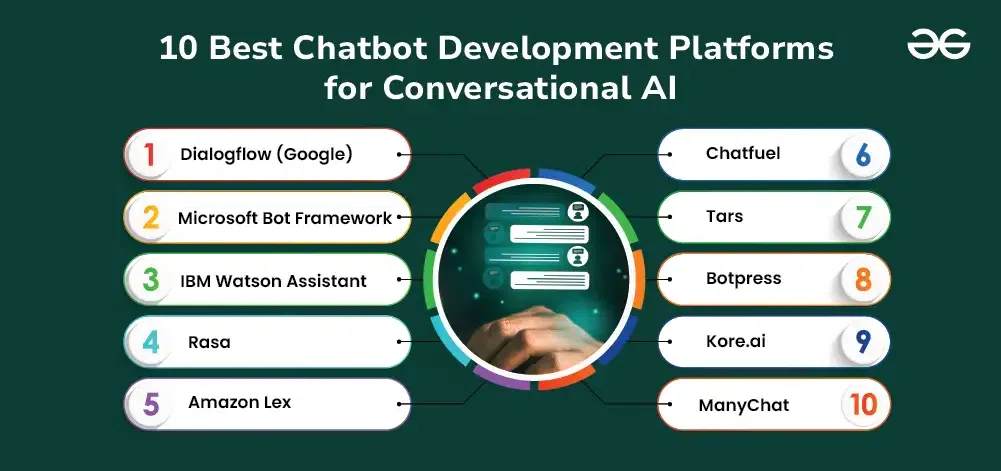

2. Discuss various tools and platforms used for developing dialogue systems.

Tools and Platforms for Developing Dialogue Systems

Developing dialogue systems relies on a diverse ecosystem of tools, categorized by the system’s complexity and the developer’s technical background:

- Visual Design and Prototyping Tools: Platforms like Google Dialogflow and Amazon Lex provide web consoles with visual conversation builders. They allow designers to map out conversational flows visually, making them well suited to prototyping rule-based or intent-driven systems without deep programming expertise.
- Advanced Frameworks and Toolkits: For greater control, open-source frameworks like Rasa are widely used. Rasa lets developers build customized, machine-learning-based pipelines for Natural Language Understanding (NLU) and dialogue management. The Microsoft Bot Framework also offers SDKs for deploying bots across multiple digital channels.
- Voice Assistant Platforms: Environments like the Alexa Skills Kit (ASK) provide the APIs needed to build custom voice-driven dialogue systems. ASK targets Alexa-enabled devices such as smart speakers and serves as the foundation for prominent academic competitions like the Alexa Prize.
- Scripting Languages: For strictly handcrafted, rule-based systems, developers often use markup languages like AIML (Artificial Intelligence Markup Language) or VoiceXML to explicitly script system responses and manage structured conversation flows.
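
To make the handcrafted, rule-based style concrete, here is a minimal Python sketch of pattern-to-response scripting. It only imitates the general idea behind AIML-style rules; it is not AIML or VoiceXML syntax, and the `RULES` table, the `respond` function, and the example patterns are hypothetical.

```python
import re

# Hypothetical hand-written rules: (pattern, response template), checked in order.
RULES = [
    (re.compile(r"\bhello\b|\bhi\b", re.IGNORECASE), "Hello! How can I help you?"),
    (re.compile(r"\bmy name is (\w+)", re.IGNORECASE), "Nice to meet you, {0}."),
    (re.compile(r"\bbook .*flight to (\w+)", re.IGNORECASE), "Searching for flights to {0}."),
]
FALLBACK = "Sorry, I did not understand that."

def respond(utterance: str) -> str:
    """Return the response of the first rule whose pattern matches the utterance."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            # Captured groups (if any) fill the numbered slots in the template.
            return template.format(*match.groups())
    return FALLBACK
```

A request such as "Please book a flight to Paris" matches the third rule, but a paraphrase like "I need a plane ticket" falls through to the fallback. Every new phrasing needs another hand-written rule, which is the scaling problem that motivates the learned approaches offered by the other tool categories.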

3. Explain Dialogue Systems in detail.

What are Dialogue Systems?

A dialogue system (often referred to as a conversational agent, virtual assistant, or chatbot) is a computer system designed to engage in natural language conversation with human users via text, speech, or multimodal interfaces. Its primary purpose is to seamlessly bridge the gap between complex machine operations and natural human communication.

These systems are broadly classified into two main categories:

- Task-Oriented Systems: Designed to assist users in completing specific, well-defined goals, such as booking a flight, retrieving banking information, or controlling smart home devices.
- Open-Domain Systems: Built for casual, unstructured conversation (chit-chat). Their goal is to maintain engaging, extended interactions without a specific endpoint.

The modeling and design of dialogue systems have evolved significantly over time. Early models were heavily rule-based, relying on rigid, hand-crafted scripts and pattern matching. To handle the unpredictability of human speech, the field shifted toward statistical data-driven approaches, utilizing probability and machine learning to manage dialogue flow. Today, the cutting edge relies on end-to-end neural dialogue systems, which use deep learning to process massive datasets and generate highly fluent, context-aware responses directly from user inputs.

4. Discuss how reinforcement learning improves dialogue system performance.

How Reinforcement Learning Improves Dialogue Systems

Reinforcement Learning (RL) turns a dialogue system from a rigid, scripted bot into a goal-oriented agent that learns from interaction. By framing a conversation as a sequential decision-making process, typically a Markov Decision Process (MDP) or a Partially Observable Markov Decision Process (POMDP), RL allows the system to optimize for long-term success rather than just the immediate next turn.

Here is how RL specifically enhances performance:

- Automated Policy Optimization: Instead of developers manually writing exhaustive “if-then” rules for what the system should say next, RL enables the system to learn an optimal Dialogue Policy autonomously. Through trial and error (often using simulated users), the agent learns which conversational actions yield the highest rewards.
- Focusing on Cumulative Goals: While a supervised model might only be trained to predict the next word or response, RL optimizes for the outcome of the whole conversation. It assigns numerical rewards for successful task completion (like booking a flight) or user satisfaction, and penalties for overly long or failed interactions.
- Robustness to Uncertainty: Human speech is ambiguous and prone to recognition errors. By utilizing POMDPs, RL lets the system manage this uncertainty. It maintains a probability distribution over multiple possible user intents (Dialogue State Tracking) and chooses actions that safely clarify or recover from misunderstandings.
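
The points above can be sketched with tabular Q-learning on a toy flight-booking dialogue. This is a minimal illustration under stated assumptions, not a production recipe: the two-slot state, the three actions, the simulated user that is understood 80% of the time, and the reward values (+20 for success, -10 for a premature booking, -1 per turn) are all invented for the example.

```python
import random
from collections import defaultdict

ACTIONS = ["ask_origin", "ask_destination", "book"]

def step(state, action, rng):
    """Hypothetical simulated user; returns (next_state, reward, done)."""
    origin, dest = state
    if action == "book":
        # +20 for booking with both slots filled, -10 for booking too early.
        return state, (20.0 if origin and dest else -10.0), True
    # The user answers, but the answer is understood only 80% of the time.
    understood = rng.random() < 0.8
    if action == "ask_origin":
        origin = origin or understood
    else:
        dest = dest or understood
    return (origin, dest), -1.0, False  # every extra turn costs 1

def train(episodes=10000, alpha=0.1, gamma=0.95, epsilon=0.2, max_turns=20, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)  # maps (state, action) to the estimated return
    for _ in range(episodes):
        state = (False, False)  # (origin known, destination known)
        for _ in range(max_turns):
            # Epsilon-greedy: mostly exploit, sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action, rng)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # Q-learning update toward reward plus discounted future value.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            if done:
                break
            state = next_state
    return q

def greedy_action(q, state):
    return max(ACTIONS, key=lambda a: q[(state, a)])

q_table = train()
states = [(False, False), (True, False), (False, True), (True, True)]
policy = {s: greedy_action(q_table, s) for s in states}
```

No rule says "ask for the missing slot before booking". The agent arrives at that behavior because premature bookings are penalized and each extra turn costs a little, so the learned policy asks for whatever is missing and books as soon as both slots are filled.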

5. Why is a statistical data-driven approach needed in dialogue systems?

The necessity for a statistical data-driven approach in dialogue systems arises directly from the limitations of traditional rule-based architectures. While rule-based systems function well in narrow, highly controlled domains, they are extremely brittle and labor-intensive to scale. Human conversation is inherently unpredictable, filled with ambiguity, interruptions, and speech recognition errors.

A statistical approach is crucial because it introduces the ability to manage this inherent uncertainty mathematically. Instead of relying on a rigid “if-then” logic path, statistical models treat dialogue as a probabilistic process. Key reasons for this shift include:

- Managing Ambiguity: Through frameworks like Partially Observable Markov Decision Processes (POMDPs), the system does not commit to a single interpretation of a user’s input. Instead, it uses Dialogue State Tracking to maintain a probability distribution over multiple possible meanings.
- Automated Learning: Rather than manually anticipating every conversational turn, statistical systems leverage Reinforcement Learning (RL). By interacting with users (or simulators), the system automatically learns an optimal Dialogue Policy that maximizes the chances of a successful interaction.
- Scalability: Learning directly from massive datasets of human conversation significantly reduces the human engineering effort required to expand the system into new domains.
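
For readers who want the probabilistic view in symbols, the two ideas above are commonly written as follows. The notation is an assumption introduced here, not taken from the answer itself: $s$ is the hidden dialogue state, $a$ the system action, $o'$ the new (noisy) observation of the user's input, $b$ the belief, $\eta$ a normalizing constant, $r_t$ the reward at turn $t$, and $\gamma$ a discount factor.

```latex
% Belief update (dialogue state tracking): redistribute probability
% over states after taking action a and observing o'.
b'(s') = \eta \, P(o' \mid s', a) \sum_{s} P(s' \mid s, a) \, b(s)

% Policy optimization: choose the policy that maximizes the expected
% discounted sum of rewards over the whole dialogue.
\pi^{*} = \arg\max_{\pi} \; \mathbb{E}_{\pi}\!\left[ \sum_{t=0}^{T} \gamma^{t} r_{t} \right]
```

The first line is what "maintaining a probability distribution over multiple possible meanings" amounts to, and the second is the quantity a learned Dialogue Policy is trained to maximize.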

6. Explain the architecture and working of present-day dialogue systems.

Architecture and Working of Present-Day Dialogue Systems

Present-day dialogue systems typically employ either a modular pipeline or an end-to-end neural architecture to process user inputs and generate appropriate responses.

The standard pipeline architecture operates through a sequence of interconnected components:

- Automatic Speech Recognition (ASR): If the input is spoken, ASR converts the user’s audio signal into raw text.
- Natural Language Understanding (NLU): This module analyzes the text to determine the user’s core intent (what they want to achieve) and extracts relevant entities (specific data points, such as a date, location, or name).
- Dialogue Management (DM): Acting as the central controller, the DM consists of two sub-components:
  - Dialogue State Tracking (DST): Updates and maintains the current context of the conversation using the entire interaction history.
  - Dialogue Policy: Decides the system’s next logical action or response based on the updated state.
- Natural Language Generation (NLG): Translates the abstract action decided by the DM into fluent, human-readable text.
- Text-to-Speech (TTS): Synthesizes the text response back into spoken audio.
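
The flow through the text-based middle of this pipeline (NLU, DST, Dialogue Policy, NLG) can be sketched in a few lines of Python. ASR and TTS are left out, and every function, intent name, and template below is a hypothetical, deliberately naive stand-in for what would be a trained model in a real system.

```python
import re
from typing import Dict, Optional, Tuple

State = Dict[str, Optional[str]]

def nlu(text: str) -> State:
    """Toy NLU: keyword intent detection plus regex entity extraction."""
    lowered = text.lower()
    intent = "book_flight" if "flight" in lowered else "unknown"
    match = re.search(r"\bto ([a-z]+)", lowered)
    destination = match.group(1).title() if match else None
    return {"intent": intent, "destination": destination}

def track_state(state: State, frame: State) -> State:
    """Toy DST: keep earlier values and overwrite them with newly understood ones."""
    new_state = dict(state)
    for key, value in frame.items():
        if value is not None and value != "unknown":
            new_state[key] = value
    return new_state

def policy(state: State) -> str:
    """Toy Dialogue Policy: pick the next abstract system action from the state."""
    if state.get("intent") != "book_flight":
        return "ask_intent"
    if state.get("destination") is None:
        return "ask_destination"
    return "confirm_booking"

def nlg(action: str, state: State) -> str:
    """Toy NLG: turn the abstract action into text using fixed templates."""
    templates = {
        "ask_intent": "How can I help you?",
        "ask_destination": "Where would you like to fly to?",
        "confirm_booking": "Booking a flight to {destination}.",
    }
    return templates[action].format(**state)

def handle_turn(text: str, state: State) -> Tuple[str, State]:
    """Run one user turn through NLU -> DST -> Policy -> NLG."""
    state = track_state(state, nlu(text))
    return nlg(policy(state), state), state

# Two-turn usage example: the state carries the intent across turns.
reply1, state = handle_turn("I want a flight", {})
reply2, state = handle_turn("To Paris please", state)
```

The second user turn never mentions a flight, yet the system can still confirm the booking because the tracked state remembers the intent from the first turn. That carry-over is the job of the DST component.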

Increasingly, end-to-end neural dialogue systems replace these separate, explicitly engineered modules with a single model. Instead of passing information from one component to the next, they use large deep learning models trained to map user inputs directly to generated responses.