
1. Shannon Capacity

A digital signal is transmitted through a channel that is limited by its bandwidth (in Hertz) and by unwanted noise and interference. The absolute maximum data rate that can be transmitted over this channel without errors is called the Channel Capacity.

Shannon’s Formula:

$$C = B \log_2\left(1 + \frac{S}{N}\right)$$

Claude Shannon proved that this maximum capacity ($C$) depends entirely on the available bandwidth ($B$) and the Signal-to-Noise Ratio ($S/N$ or SNR).

- S (Signal Power): The strength of the signal, which decreases over distance due to attenuation.
- N (Noise): Includes thermal noise generated by vibrating atoms in circuits, plus interference from other users.
  - Thermal Noise Formula: $N = k \cdot T \cdot B$
  - Where $k$ is the Boltzmann constant ($1.38 \times 10^{-23}$ Ws/K), $T$ is temperature in Kelvin, and $B$ is the bandwidth.

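- Calculating Signal-to-Noise Ratio (SNR): Because power levels vary drastically (from microwatts to watts), engineers use logarithmic decibels (dB) to represent power ratios simply.
  - SNR in dB: $\text{SNR}_{\text{dB}} = 10 \log_{10} \frac{S}{N}$
  - dBm: Power relative to 1 milliwatt ($P_{\text{dBm}} = 10 \log_{10} \frac{P}{1\,\text{mW}}$)
  - dBW: Power relative to 1 Watt ($P_{\text{dBW}} = 10 \log_{10} \frac{P}{1\,\text{W}}$)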

Example Calculation: If a channel has a 20 MHz bandwidth at 300 K, the thermal noise is $N = kTB = 8.28 \times 10^{-14}$ W. If the output signal power is $-30$ dBm ($10^{-6}$ W), the SNR is $1.21 \times 10^{7}$ (or 70.8 dB). Using Shannon’s formula, the maximum capacity would be roughly 470 Mbps.
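The example calculation can be reproduced with a short Python sketch. It assumes a signal power of 1 µW (−30 dBm), which is the value consistent with the quoted 70.8 dB; the function names are illustrative only.

```python
import math

BOLTZMANN = 1.38e-23  # Boltzmann constant k in Ws/K, as used in the notes


def thermal_noise_w(temperature_k, bandwidth_hz):
    """Thermal noise power N = k * T * B in watts."""
    return BOLTZMANN * temperature_k * bandwidth_hz


def dbm_to_w(dbm):
    """Convert a power level in dBm (relative to 1 mW) to watts."""
    return 1e-3 * 10 ** (dbm / 10)


def to_db(ratio):
    """Express a linear power ratio in decibels."""
    return 10 * math.log10(ratio)


def shannon_capacity_bps(bandwidth_hz, snr_linear):
    """Shannon capacity C = B * log2(1 + S/N) in bits per second."""
    return bandwidth_hz * math.log2(1 + snr_linear)


bandwidth = 20e6                       # 20 MHz channel
noise = thermal_noise_w(300, bandwidth)
signal = dbm_to_w(-30)                 # assumed signal power: 1 microwatt
snr = signal / noise
capacity = shannon_capacity_bps(bandwidth, snr)

print(f"N   = {noise:.3e} W")
print(f"SNR = {snr:.3e} ({to_db(snr):.1f} dB)")
print(f"C   = {capacity / 1e6:.0f} Mbps")
```

Note that capacity grows linearly with bandwidth but only logarithmically with SNR, so doubling the signal power adds roughly one bit per second per hertz at most.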

2. Multimedia Data

All real-world information (text, audio, video) must be converted into binary bits (0s and 1s) to be processed digitally.

Advantages of Digital Processing:

- Lower costs (no need for highly precise analog components).
- Higher channel efficiency (allows for data compression).
- High reliability (allows for automatic error correction).

Disadvantages: Requires complex circuits and more spectrum bandwidth.

Analogue-to-Digital Conversion (ADC): Converting a continuous analog signal into digital data involves two steps:

- Sampling: Taking snapshots of the signal’s amplitude at regular time intervals.
  - Nyquist Criterion: The sampling frequency must be at least twice the maximum signal frequency ($f_s \geq 2 f_{max}$) to avoid losing information.
  - Example: Human voice over telephones (up to 3.4 kHz) is sampled at 8 kHz.
- Quantisation: Approximating the sampled amplitudes to discrete digital steps.
  - Using $n$ bits provides $2^n$ discrete levels, with $2^n - 1$ intervals between them. The quantisation error is limited to half a step size ($\Delta A / 2$).
  - A-Law: A logarithmic compression algorithm used in Europe to encode voice efficiently. In ISDN telephony, voice is sampled at 8 kHz and quantised with 8 bits, resulting in a 64 kbps data rate.

| n bits | $2^n - 1$ intervals | Error less than ΔA/2, relative to (Amax − Amin) |
|---|---|---|
| 1 | 1 | 0.500 |
| 2 | 3 | 0.167 |
| 3 | 7 | 0.071 |
| 4 | 15 | 0.033 |
| 5 | 31 | 0.016 |
| 6 | 63 | 0.008 |
| 7 | 127 | 0.004 |
| 8 | 255 | 0.002 |
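The sampling and quantisation steps can be illustrated with a small sketch. It uses a plain uniform quantiser with $2^n$ levels (not A-Law companding), and the 1 kHz test tone and the function name `quantise` are assumptions made for the example.

```python
import math


def quantise(value, a_min, a_max, n_bits):
    """Round value to the nearest of 2**n_bits evenly spaced levels.

    Returns the quantised value and the step size (delta A).
    """
    intervals = 2 ** n_bits - 1
    step = (a_max - a_min) / intervals
    index = round((value - a_min) / step)
    index = max(0, min(intervals, index))  # clip to the valid range
    return a_min + index * step, step


f_max = 3400   # highest telephone voice frequency in Hz
f_s = 8000     # sampling frequency in Hz
nyquist_ok = f_s >= 2 * f_max

# Sample a 1 kHz test tone (an arbitrary choice) at 8 kHz and quantise with 8 bits.
n_bits = 8
samples = [math.sin(2 * math.pi * 1000 * k / f_s) for k in range(80)]
worst_error = 0.0
for s in samples:
    q, step = quantise(s, -1.0, 1.0, n_bits)
    worst_error = max(worst_error, abs(q - s))

bit_rate = f_s * n_bits  # bits per second for one voice channel

print("Nyquist criterion satisfied:", nyquist_ok)
print(f"step = {step:.5f}, worst error = {worst_error:.5f}")
print("bit rate =", bit_rate, "bit/s")
```

The worst-case error stays within half a step, and 8000 samples per second at 8 bits each gives the 64 kbps ISDN voice rate.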

Binary Logic & Hardware:

- Positive Logic: “1” = High Voltage (True), “0” = Low Voltage (False).
- Negative Logic: “1” = Low Voltage, “0” = High Voltage.
- Integration Scales: Hardware circuits have shrunk massively over time and are categorized by component count: SSI (<100), MSI (<1,000), LSI (<10,000), VLSI (<100k), ULSI (<1M), SLSI (<10M), ELSI (<100M), up to GLSI (Giant Large Scale Integration) with over 100 million components.

3. Data Processing & Boolean Logics

Digital logic gates process binary data using Boolean algebra.

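- AND ($\wedge$): Output is 1 only if both inputs are 1. ($Y = A \wedge B$)
- OR ($\vee$): Output is 1 if at least one input is 1. ($Y = A \vee B$)
- NOT ($\neg$): Inverts the input. ($Y = \neg A$)

| A | B | AND (A ∧ B) | OR (A ∨ B) | NOT (¬A) |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 1 |
| 0 | 1 | 0 | 1 | 1 |
| 1 | 0 | 0 | 1 | 0 |
| 1 | 1 | 1 | 1 | 0 |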

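Key Boolean Laws:

- Commutative: $A \wedge B = B \wedge A$ and $A \vee B = B \vee A$
- Associative: $(A \wedge B) \wedge C = A \wedge (B \wedge C)$ and $(A \vee B) \vee C = A \vee (B \vee C)$
- Distributive: $A \wedge (B \vee C) = (A \wedge B) \vee (A \wedge C)$ and $A \vee (B \wedge C) = (A \vee B) \wedge (A \vee C)$
- DeMorgan’s Laws: $\neg(A \wedge B) = \neg A \vee \neg B$ and $\neg(A \vee B) = \neg A \wedge \neg B$
- Shannon’s Law of Inversion: Defines how to invert entire complex logic functions: invert every variable and swap every AND with OR (and vice versa).
- Absorption: $A \vee (A \wedge B) = A$ and $A \wedge (A \vee B) = A$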
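Because each variable can only be 0 or 1, the laws listed above can be checked exhaustively. The sketch below writes each law in its standard textbook form (an assumption, since the notes only name the laws) and tests it over all eight input combinations.

```python
from itertools import product


def holds_for_all_inputs(law):
    """Return True if law(a, b, c) is true for every combination of inputs."""
    return all(law(a, b, c) for a, b, c in product([False, True], repeat=3))


laws = {
    "commutative": lambda a, b, c:
        ((a and b) == (b and a)) and ((a or b) == (b or a)),
    "associative": lambda a, b, c:
        (((a and b) and c) == (a and (b and c)))
        and (((a or b) or c) == (a or (b or c))),
    "distributive": lambda a, b, c:
        ((a and (b or c)) == ((a and b) or (a and c)))
        and ((a or (b and c)) == ((a or b) and (a or c))),
    "de_morgan": lambda a, b, c:
        ((not (a and b)) == ((not a) or (not b)))
        and ((not (a or b)) == ((not a) and (not b))),
    "absorption": lambda a, b, c:
        ((a or (a and b)) == a) and ((a and (a or b)) == a),
}

results = {name: holds_for_all_inputs(law) for name, law in laws.items()}
for name, ok in results.items():
    print(f"{name:13s} {'holds' if ok else 'FAILS'}")
```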

Engineering Application: To build a lift controller ($L$) that goes up only if the door is closed ($D$), the lift is NOT overloaded ($O$, so $\neg O$), and a button is pressed ($B$), the logic gate circuit would be wired as: $L = D \wedge \neg O \wedge B$.
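The lift condition can be written as a one-line Boolean function and checked against its full truth table. The argument names below are illustrative and not taken from the original notes.

```python
from itertools import product


def lift_goes_up(door_closed, overloaded, button_pressed):
    """AND of three conditions, with the overload signal inverted (NOT)."""
    return door_closed and (not overloaded) and button_pressed


# Print the full truth table: only one of the eight rows enables the lift.
print("door overload button -> up")
for door, overload, button in product([False, True], repeat=3):
    up = lift_goes_up(door, overload, button)
    print(f"{int(door):4d} {int(overload):8d} {int(button):6d} -> {int(up)}")
```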

4. Information Content & Entropy

Information Content ($I$): The amount of information a specific symbol carries grows as its probability $p$ of appearing falls ($I = \log_2 \frac{1}{p}$ bits). A rare letter carries more “surprise” and thus more information.

Entropy ($H$):

The average amount of information per symbol across an entire message, $H = \sum_i p_i \log_2 \frac{1}{p_i}$.

- Max Entropy ($H_{max} = \log_2 M$ for an alphabet of $M$ symbols): This is only reached if every symbol is used equally often.

Redundancy (): Redundancy is the difference between Max Entropy and actual Entropy (). Languages are naturally redundant, which helps us correct errors (e.g., guessing a missing letter in a word).

-

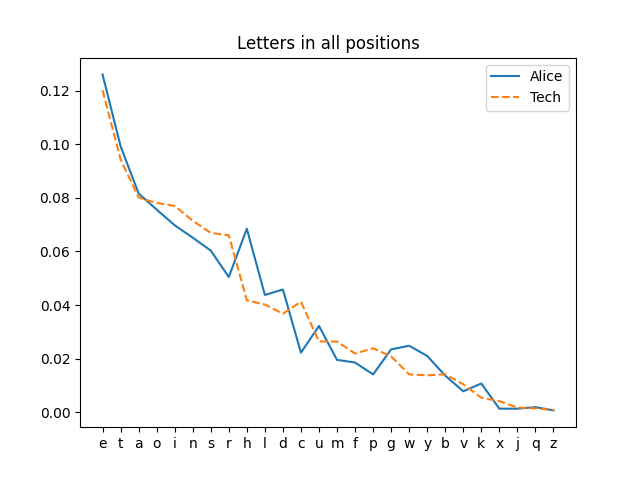

Example: Analyzing an English newspaper article yields an actual Entropy of . Because the letter ‘e’ appears ~14% of the time, the letters are not used equally. Max entropy would be 4.70. Therefore, the Redundancy is .
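Entropy and redundancy can be measured directly from letter counts. The sketch below is a minimal illustration: the sample sentence is made up for the example (it is not the newspaper article from the notes), and only the 26 letters a to z are counted.

```python
import math
from collections import Counter


def entropy_bits(text):
    """Entropy H = sum(p * log2(1/p)) over the letters a-z found in text."""
    letters = [ch for ch in text.lower() if "a" <= ch <= "z"]
    counts = Counter(letters)
    total = len(letters)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


ALPHABET_SIZE = 26
h_max = math.log2(ALPHABET_SIZE)   # maximum entropy, about 4.70 bits per letter

# Hypothetical sample text, chosen only to demonstrate the calculation.
sample = "the engineer sent the message over the noisy channel and received it again"
h = entropy_bits(sample)
redundancy = h_max - h

print(f"H_max = {h_max:.2f} bits/letter")
print(f"H     = {h:.2f} bits/letter")
print(f"R     = {redundancy:.2f} bits/letter")
```

A short sample like this overstates redundancy because many letters never appear; a long text gives a more representative estimate.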

-

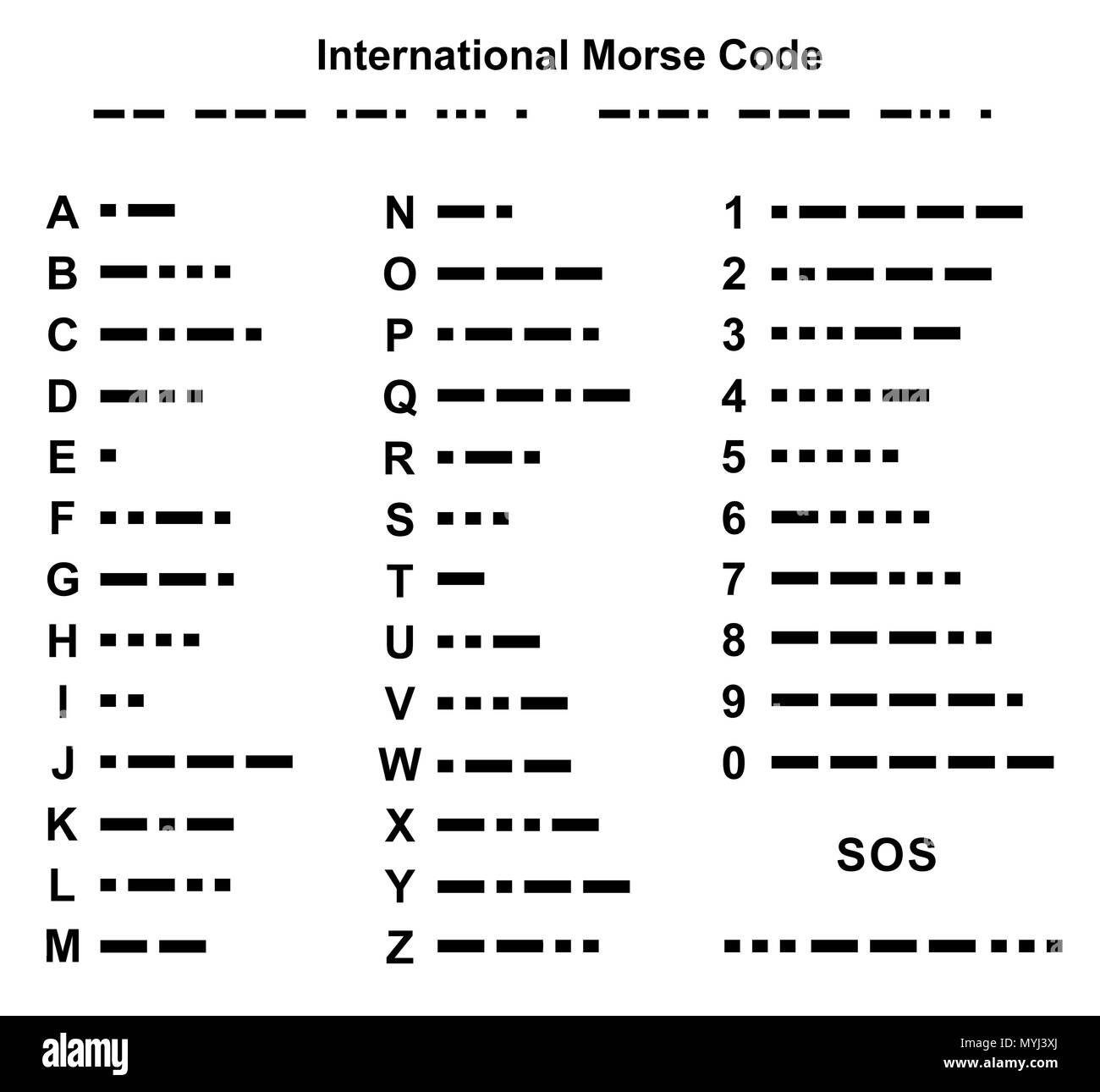

Morse Code: Uses redundancy to its advantage. The most common letter (‘e’) gets the shortest code (a single dot), while rare letters (‘q’) get long codes, creating high efficiency.

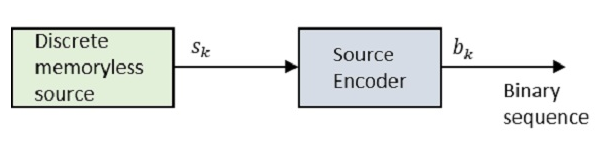

5. Source Coding

Source coding compresses data by removing redundancy and irrelevant details, heavily reducing bandwidth requirements.

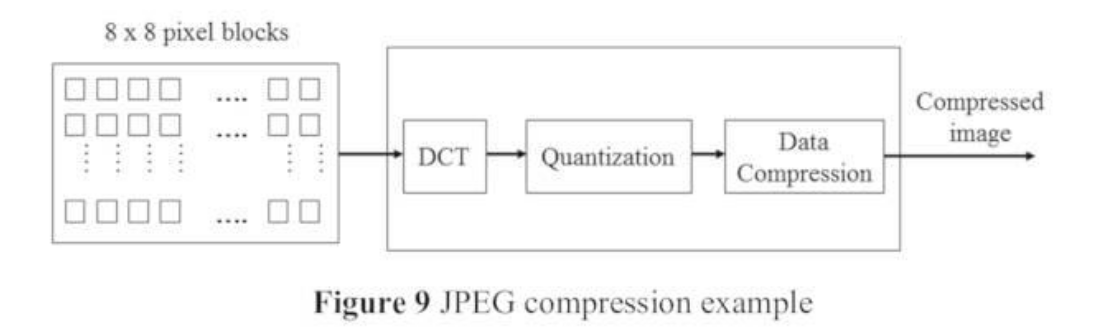

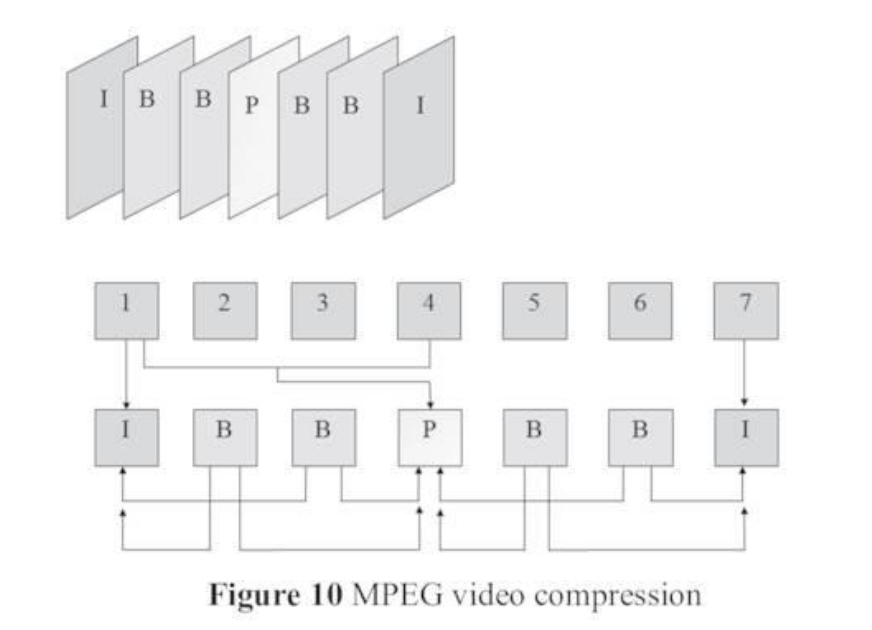

Video Compression (MPEG): Uncompressed video is massive. A 1280x720 video at 50 frames per second using 24-bit color generates about 1.106 Gbps. Compression is mandatory and done in two steps:

- Spatial Compression (JPEG): Compresses each single image frame independently using Discrete Cosine Transform (DCT) to remove irrelevant visual details.
- Temporal Compression (MPEG): Removes redundant background data between consecutive moving frames. It transmits:
  - I-Frames: Independent, full original pictures.
  - P-Frames: Predicted frames (only stores what changed since the last I or P frame).
  - B-Frames: Bidirectional frames (calculates changes by looking at both past and future frames).

By using MPEG-2, the 1.106 Gbps video can be shrunk down to just 27 Mbps.
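The raw bit rate and the resulting compression ratio follow from simple arithmetic, sketched here with the figures quoted above:

```python
width, height = 1280, 720      # pixels per frame
frames_per_second = 50
bits_per_pixel = 24            # 24-bit color

raw_bps = width * height * frames_per_second * bits_per_pixel
mpeg2_bps = 27e6               # MPEG-2 rate quoted in the notes

compression_ratio = raw_bps / mpeg2_bps

print(f"raw rate    = {raw_bps / 1e9:.3f} Gbps")
print(f"MPEG-2 rate = {mpeg2_bps / 1e6:.0f} Mbps")
print(f"ratio       = {compression_ratio:.0f}:1")
```

In other words, MPEG-2 keeps roughly one bit out of every 41 in this example.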

6. Channel Coding

While source coding removes redundancy, channel coding intentionally adds redundant bits to help receivers automatically detect and fix errors caused by channel noise (FEC - Forward Error Correction), avoiding the delay of asking for retransmissions (ARQ - Automatic Repeat reQuest).

Techniques:

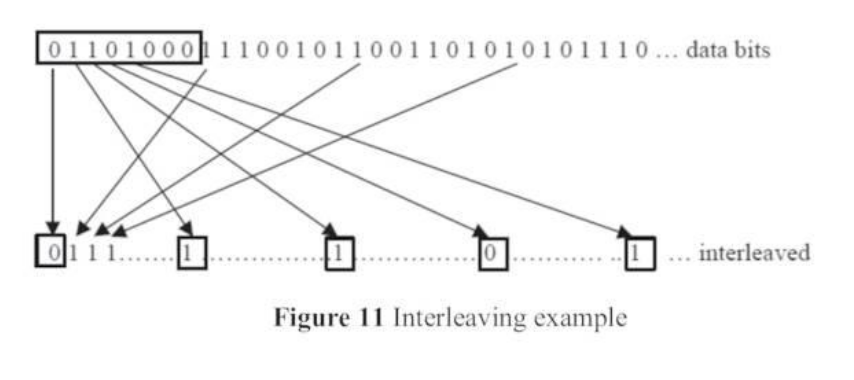

- Interleaving: Shuffles the data bits before sending. If a burst of noise destroys a chunk of bits, the receiver de-interleaves them, spreading the errors out into “single-bit” errors that are much easier to fix.
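A block interleaver is one simple way to do this shuffling: write the bits row by row into a grid and read them out column by column. The sketch below assumes a 3x4 grid and uses position numbers instead of real bits so the effect of a burst is easy to see; the notes do not specify a particular interleaver.

```python
def interleave(items, rows, cols):
    """Write row by row into a rows x cols grid, read column by column."""
    assert len(items) == rows * cols
    return [items[r * cols + c] for c in range(cols) for r in range(rows)]


def deinterleave(items, rows, cols):
    """Inverse of interleave: restore the original row-by-row order."""
    assert len(items) == rows * cols
    return [items[c * rows + r] for r in range(rows) for c in range(cols)]


ROWS, COLS = 3, 4
data = list(range(ROWS * COLS))          # 0..11 stand in for 12 data bits

sent = interleave(data, ROWS, COLS)

# A noise burst wipes out three consecutive transmitted positions.
received = sent[:]
for i in (3, 4, 5):
    received[i] = "X"

restored = deinterleave(received, ROWS, COLS)
error_positions = [i for i, v in enumerate(restored) if v == "X"]

print("sent:    ", sent)
print("restored:", restored)
print("errors at original positions:", error_positions)
```

After de-interleaving, the three-position burst lands in three different rows, so a code that can fix one error per row can repair all of it.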

-

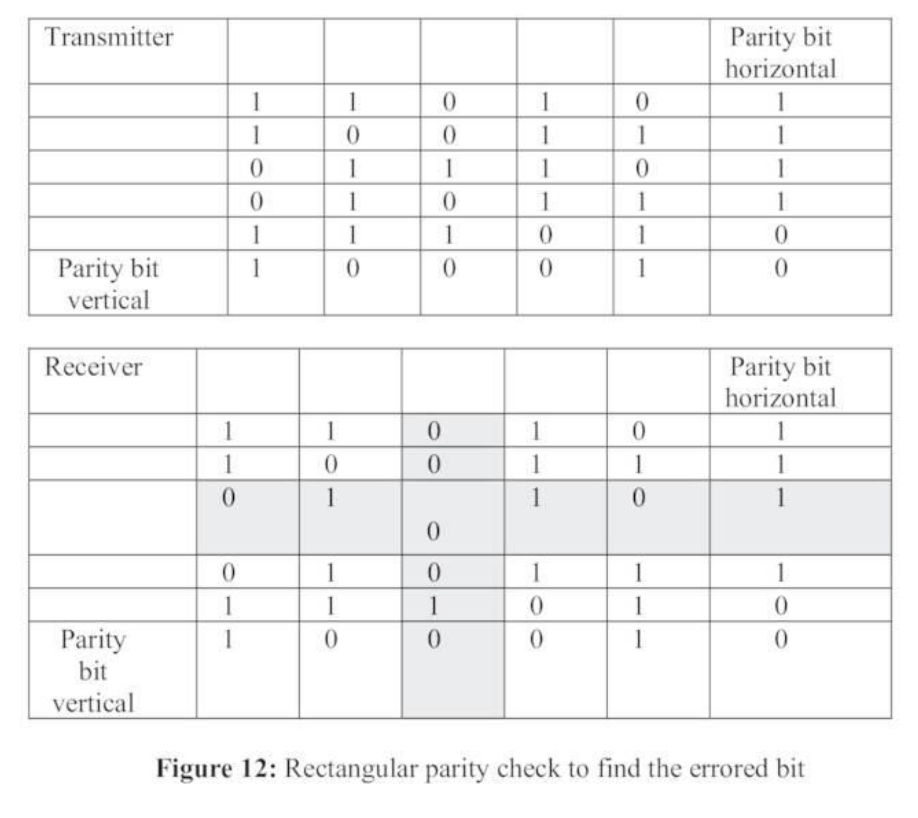

Parity Checks: Adding bits to ensure the total number of 1s is always even.

- Rectangular (2D) Parity: Arranges data in rows and columns with vertical and horizontal parity bits. This grid allows the receiver to pinpoint and flip the exact single broken bit.
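A minimal sketch of rectangular parity, assuming even parity and a small 3x4 data block chosen for illustration. A single flipped bit makes exactly one row and one column fail their parity check, and their intersection is the bit to flip back.

```python
def add_parity(rows):
    """Append an even-parity bit to each row, then append a parity row."""
    with_row_parity = [row + [sum(row) % 2] for row in rows]
    parity_row = [sum(col) % 2 for col in zip(*with_row_parity)]
    return with_row_parity + [parity_row]


def correct_single_error(block):
    """Locate and flip a single bad bit in place; return its (row, col) or None."""
    bad_rows = [r for r, row in enumerate(block) if sum(row) % 2]
    bad_cols = [c for c, col in enumerate(zip(*block)) if sum(col) % 2]
    if len(bad_rows) == 1 and len(bad_cols) == 1:
        block[bad_rows[0]][bad_cols[0]] ^= 1
        return bad_rows[0], bad_cols[0]
    return None


data = [
    [1, 0, 1, 1],
    [0, 1, 0, 0],
    [1, 1, 1, 0],
]
sent = add_parity(data)

received = [row[:] for row in sent]
received[1][2] ^= 1                      # channel noise flips one bit

location = correct_single_error(received)
print("error found at (row, col):", location)
print("block repaired:", received == sent)
```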

-

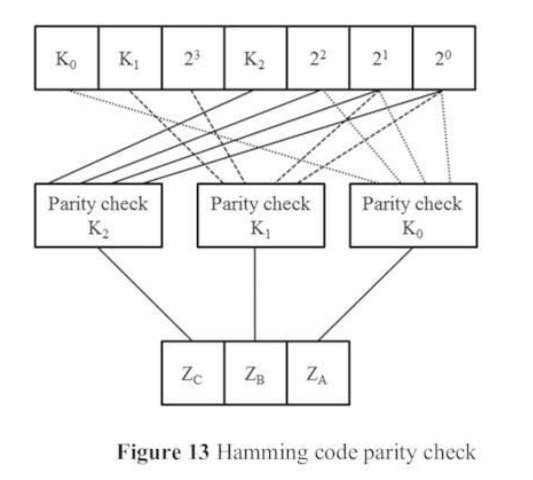

Linear Block Codes: Maps message bits into an -bit code word using a Generator Matrix (). At the receiver, a Parity Check Matrix () generates a “Syndrome” (). If , there are no errors. If , the syndrome mathematically points to the exact error pattern to be corrected.

- Hamming Code: A popular block code that easily fixes single-bit errors.
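As a concrete instance, the sketch below implements a (7,4) Hamming code with a generator matrix and a parity check matrix. The matrices are one common systematic choice and are an assumption here; the matrices shown in the notes’ figures may be arranged differently.

```python
# Generator matrix G = [I4 | P]: 4 message bits -> 7-bit code word.
G = [
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 0, 1],
    [0, 0, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

# Parity check matrix H = [P^T | I3]; every column is a distinct non-zero pattern.
H = [
    [1, 1, 0, 1, 1, 0, 0],
    [1, 0, 1, 1, 0, 1, 0],
    [0, 1, 1, 1, 0, 0, 1],
]


def encode(message):
    """Code word = message * G (mod 2)."""
    return [sum(m * g for m, g in zip(message, col)) % 2 for col in zip(*G)]


def syndrome(word):
    """Syndrome = H * word (mod 2); all zeros means no error detected."""
    return [sum(h * w for h, w in zip(row, word)) % 2 for row in H]


def correct(word):
    """Fix a single-bit error: the syndrome equals the H column of the bad bit."""
    s = syndrome(word)
    fixed = word[:]
    if any(s):
        columns = [list(col) for col in zip(*H)]
        fixed[columns.index(s)] ^= 1
    return fixed


message = [1, 0, 1, 1]
code_word = encode(message)

received = code_word[:]
received[2] ^= 1                         # single-bit channel error

print("code word:", code_word)
print("syndrome of received word:", syndrome(received))
print("corrected:", correct(received) == code_word)
```

Because the code is systematic, the first four bits of the corrected word are the original message.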

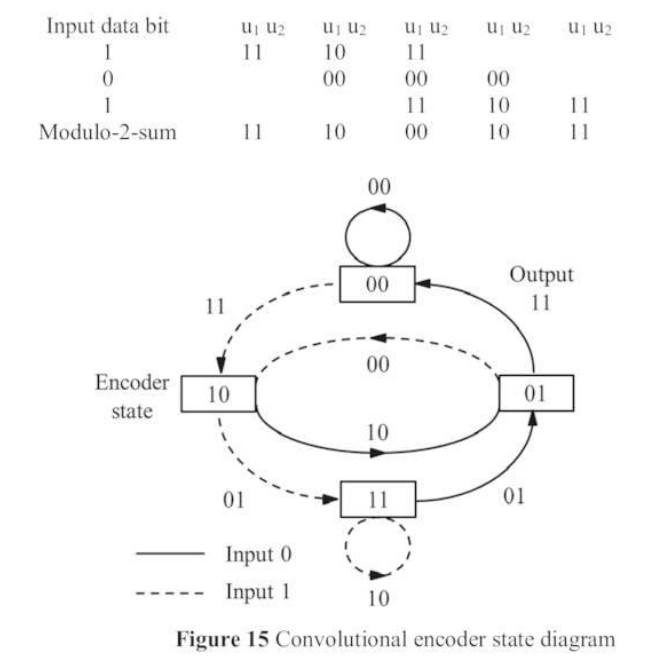

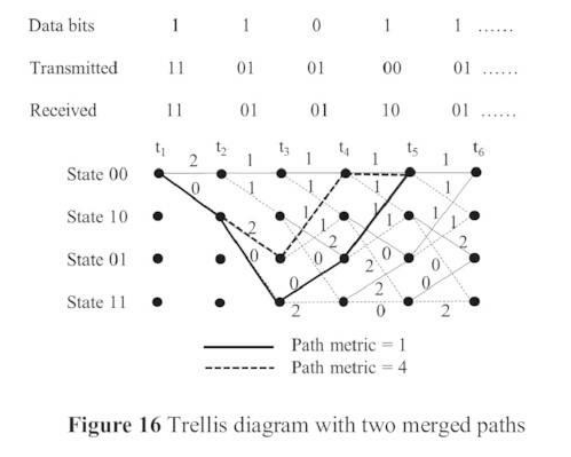

- Convolutional Codes: Unlike block codes, these use shift registers to add “memory”. The output code depends not only on the current input bit but also on the previous bits in the register (defined by constraint length $K$).
  - Viterbi Decoding: A Maximum Likelihood decoding algorithm. It uses a “Trellis Diagram” to map all possible paths the convolutional code could have taken. When paths merge, it discards the paths with high error metrics (Hamming distances) to deduce the original data.
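The encoder and decoder can be sketched together. The example assumes a rate-1/2 code with constraint length 3 and generator taps 111 and 101, a common textbook choice that the notes do not specify, and a hard-decision Viterbi decoder that keeps one surviving path per trellis state.

```python
def conv_encode(bits):
    """Rate-1/2 convolutional encoder, constraint length 3, taps 111 and 101."""
    s1 = s2 = 0                      # shift register: the two previous input bits
    out = []
    for b in bits:
        out += [b ^ s1 ^ s2, b ^ s2]
        s1, s2 = b, s1
    return out


def viterbi_decode(received, n_bits):
    """Hard-decision Viterbi: keep the lowest Hamming-distance path per state."""
    # survivors[state] = (accumulated Hamming distance, decoded bits so far)
    survivors = {(0, 0): (0, [])}
    for i in range(n_bits):
        pair = received[2 * i: 2 * i + 2]
        new_survivors = {}
        for (s1, s2), (metric, path) in survivors.items():
            for b in (0, 1):
                expected = [b ^ s1 ^ s2, b ^ s2]
                distance = (expected[0] != pair[0]) + (expected[1] != pair[1])
                candidate = (metric + distance, path + [b])
                state = (b, s1)
                # When two paths merge in a state, discard the worse one.
                if state not in new_survivors or candidate[0] < new_survivors[state][0]:
                    new_survivors[state] = candidate
        survivors = new_survivors
    return min(survivors.values(), key=lambda item: item[0])[1]


message = [1, 0, 1, 1, 0, 0]         # last two zeros flush the register
encoded = conv_encode(message)

received = encoded[:]
received[3] ^= 1                     # one bit corrupted by channel noise

decoded = viterbi_decode(received, len(message))
print("encoded: ", encoded)
print("received:", received)
print("decoded: ", decoded)
```

Each input bit produces two output bits, so the code doubles the transmitted data in exchange for the ability to correct the flipped bit.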

7. Modulation Schemes

Modulation maps digital binary data onto an analog radio or optical carrier wave so it can physically travel through the air or a cable.

Analogue vs. Digital Modulation:

-

Analogue: Varies Amplitude (AM), Frequency (FM), or Phase (PM) in a continuous wave.

-

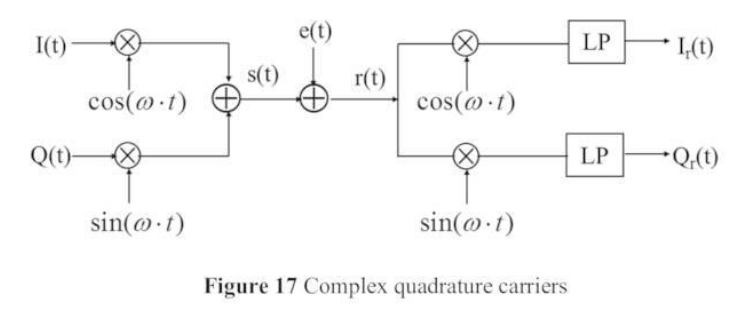

Digital: Varies the baseband In-phase () and Quadrature () components of the carrier wave in discrete, exact steps.

Digital Modulation Types (Constellations):

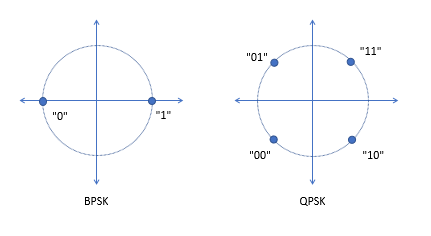

Represented on a “Constellation Diagram”. The points are arranged so adjacent symbols only differ by 1 bit (Hamming distance of 1) to make error correction easier.

-

BPSK (Binary Phase Shift Keying): 2 symbols (). Transmits 1 bit per symbol.

-

QPSK (Quadrature PSK): 4 symbols (). Transmits 2 bits per symbol.

-

8PSK & 16PSK: 8 and 16 symbols ( and ). Transmits 3 or 4 bits by shifting the phase in a circle.

-

QAM (Quadrature Amplitude Modulation): Varies both amplitude and phase. Extremely efficient. (16QAM, 32QAM, 64QAM, 256QAM).

- The Trade-off: 64QAM transmits 6 bits per symbol, making it very fast. However, because the 64 dots are packed tightly together on the constellation diagram, even a tiny amount of noise will cause the receiver to guess the wrong dot. Therefore, higher QAMs require a vastly superior Signal-to-Noise Ratio (Eb/No) to function without high bit error rates.

| Modulation scheme | Spectral efficiency (b/s/Hz) |
|---|---|
| BPSK | 1 |
| QPSK | 2 |
| 8PSK | 3 |
| 16PSK / 16QAM | 4 |
| 64QAM | 6 |
| 256QAM | 8 |
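The table values follow from $\log_2 M$ bits per symbol for an $M$-point constellation. The sketch below computes them and also builds a QPSK mapping in which neighbouring points differ by one bit; the particular bit-to-point assignment and the unit I/Q amplitudes are assumptions made for the example.

```python
def bits_per_symbol(m):
    """Bits carried by one symbol of an M-point constellation (M a power of 2)."""
    return m.bit_length() - 1


# QPSK mapping: each bit pair selects one I/Q point (real = I, imag = Q).
QPSK = {
    (0, 0): complex(1, 1),
    (0, 1): complex(-1, 1),
    (1, 1): complex(-1, -1),
    (1, 0): complex(1, -1),
}


def modulate(bits):
    """Map an even-length bit list onto QPSK symbols."""
    return [QPSK[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def demodulate(symbols):
    """Pick the nearest constellation point for each received symbol."""
    bits = []
    for sym in symbols:
        pair = min(QPSK, key=lambda p: abs(QPSK[p] - sym))
        bits.extend(pair)
    return bits


for name, m in [("BPSK", 2), ("QPSK", 4), ("8PSK", 8), ("16QAM", 16),
                ("64QAM", 64), ("256QAM", 256)]:
    print(f"{name:7s} {bits_per_symbol(m)} bits/symbol")

data = [0, 0, 0, 1, 1, 1, 1, 0]
symbols = modulate(data)
noisy = [s + complex(0.3, -0.2) for s in symbols]   # small I/Q disturbance
print("recovered:", demodulate(noisy) == data)
```

With only four widely spaced points, the small disturbance is decoded correctly; the same disturbance would matter far more in a dense 64QAM grid.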

8. Internet

The internet evolved from early Circuit-Switched voice telephone networks into modern Packet-Switched computer networks.

Brief History: It began as the ARPANET, funded by the US Department of Defense during the Cold War to create a resilient, decentralized data network. It exploded into public relevance after Tim Berners-Lee invented the World Wide Web (WWW) at CERN (proposed in 1989 and publicly released in 1991), allowing easy navigation via hyperlinks.

Key Network Protocols:

- MAC: The physical hardware address of a device.
- PPP / PPTP / L2TP: Point-to-Point and Tunneling protocols used by ISPs and VPNs to transport data over a link or through a tunnel.
- ARP / RARP: ARP resolves an IP address to a physical MAC address; RARP does the reverse, resolving a MAC address to an IP address.
- DHCP: Dynamically assigns IP addresses to new devices joining a network.
- OSPF / IGRP / BGP: Routing protocols. They calculate metrics (bandwidth, delay) to find the shortest/best path for data packets. OSPF and IGRP work inside a network (interior), while BGP routes between networks across the global internet (exterior).
- UDP (User Datagram Protocol): Unreliable transport. Sends packets fast without sequence numbers and without asking for lost data. Ideal for real-time video/voice where waiting for delayed data ruins the experience.
- TCP (Transmission Control Protocol): Reliable transport. Tracks sequence numbers and demands retransmission (ARQ) if packets are lost. Crucial for web browsing and file downloads, but adds delay.
- SMTP & MIME: Protocols for sending emails and embedding structured multimedia (images/video) into them.

Network Scale & The All-IP Future: Networks scale from PAN (Personal, like Bluetooth), to LAWN/LAN (Local/Campus), MAN (Metropolitan), WAN (Nationwide), and GAN (Global/Trans-oceanic).

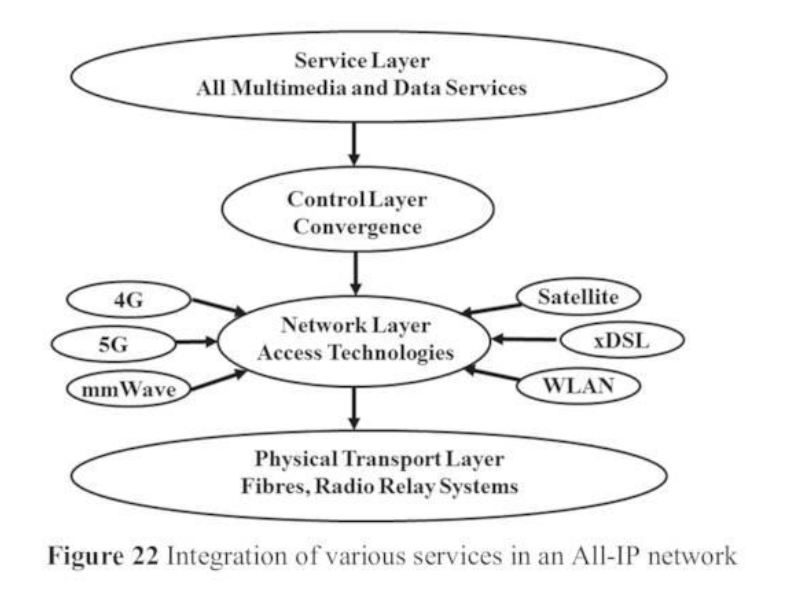

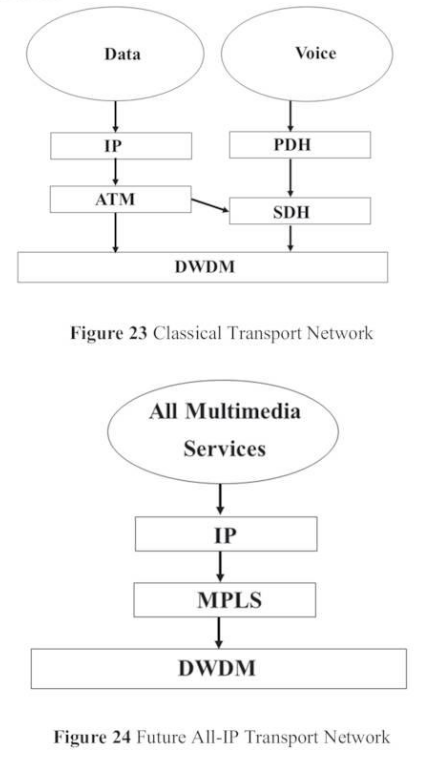

Modern telecommunications are converging onto an All-IP architecture. Instead of maintaining separate networks for TV, Telephone, and Internet, all multimedia services are packetized as IP data and routed over a unified convergence layer, regardless of the physical access technology (5G, Wi-Fi, optical fiber, or satellite) underneath.
